DOCUPIPE

Solutions

Resources

Pricing

Processing Massive Documents: Splitting & Classification

Nitai Dean

Updated Mar 30th, 2026 · 12 min read

Table of Contents

Processing Massive Documents: Splitting & Classification

Enterprise document processing rarely involves clean, single-document inputs. The reality is far messier.

A government records request returns a 1,200-page PDF containing contracts, memos, spreadsheets, and handwritten notes concatenated into a single file. A healthcare system receives faxed documents where multiple patient records are scanned together. A legal discovery process produces terabytes of mixed-format archives where document boundaries are unknown.

These massive files break standard document AI systems immediately. AI context limits overflow. Context is lost between pages. Extraction quality collapses because models cannot determine where one document ends and another begins.

What You Need to Know

The problem: 1,000-page PDFs exceed LLM context limits. Even with large context windows, quality degrades - models lose structure, confuse sections, hallucinate connections.

Why it's hard: Concatenated documents have no boundary markers. Where does the contract end and the correspondence begin? Only intelligent analysis can tell.

What works: Layout graphs capture visual and logical structure. Boundary detection finds document splits. Semantic chunking keeps tables and sections intact. Classification routes each chunk to specialized processing.

Bottom line: You can't feed massive files to AI and expect good results. You need to decompose them intelligently first.

For the broader context on enterprise document AI infrastructure, see the Enterprise Document AI Infrastructure hub article.

Why 1,000-Page PDFs Break Standard OCR and AI Systems

The architecture of most document AI systems assumes bounded inputs. Upload a document, process it as a unit, return results. This assumption fails catastrophically for massive concatenated documents.

AI Context Limits

AI models can only process a limited amount of text at once. Even models advertising large context windows degrade in quality as document length increases. A recent study demonstrated that models answering simple questions correctly with minimal context began making errors when the same questions were embedded in 25,000 tokens of irrelevant content.

For document processing, this degradation is fatal. A 1,000-page document easily exceeds 500,000 tokens. Feeding the entire document to a model, even one with a large context window, produces unreliable extractions. The model loses track of document structure, confuses content from different sections, and hallucinates connections that do not exist.

Boundary Ambiguity

When multiple documents are concatenated, the boundaries between them are not marked. A PDF might contain:

- Pages 1-47: A construction contract

- Pages 48-52: Amendment to the contract

- Pages 53-89: Correspondence about the project

- Pages 90-91: An unrelated invoice accidentally included

- Pages 92-156: Technical specifications

- Pages 157-203: More correspondence

Without explicit boundary markers, how does a system know where each document begins and ends? Page numbers reset. Headers change. Formatting varies. The only reliable approach is intelligent analysis of content and structure.

Context Loss Across Pages

Even within a single logical document, basic page-by-page processing loses critical context. A table that spans pages 34-37 cannot be extracted correctly if each page is processed independently. A paragraph that continues across a page break will be split into fragments.

The challenge is maintaining context awareness while keeping processing units small enough for reliable extraction. This requires understanding document structure at a level that page boundaries do not provide.

Semantic Chunking and Layout Graphs

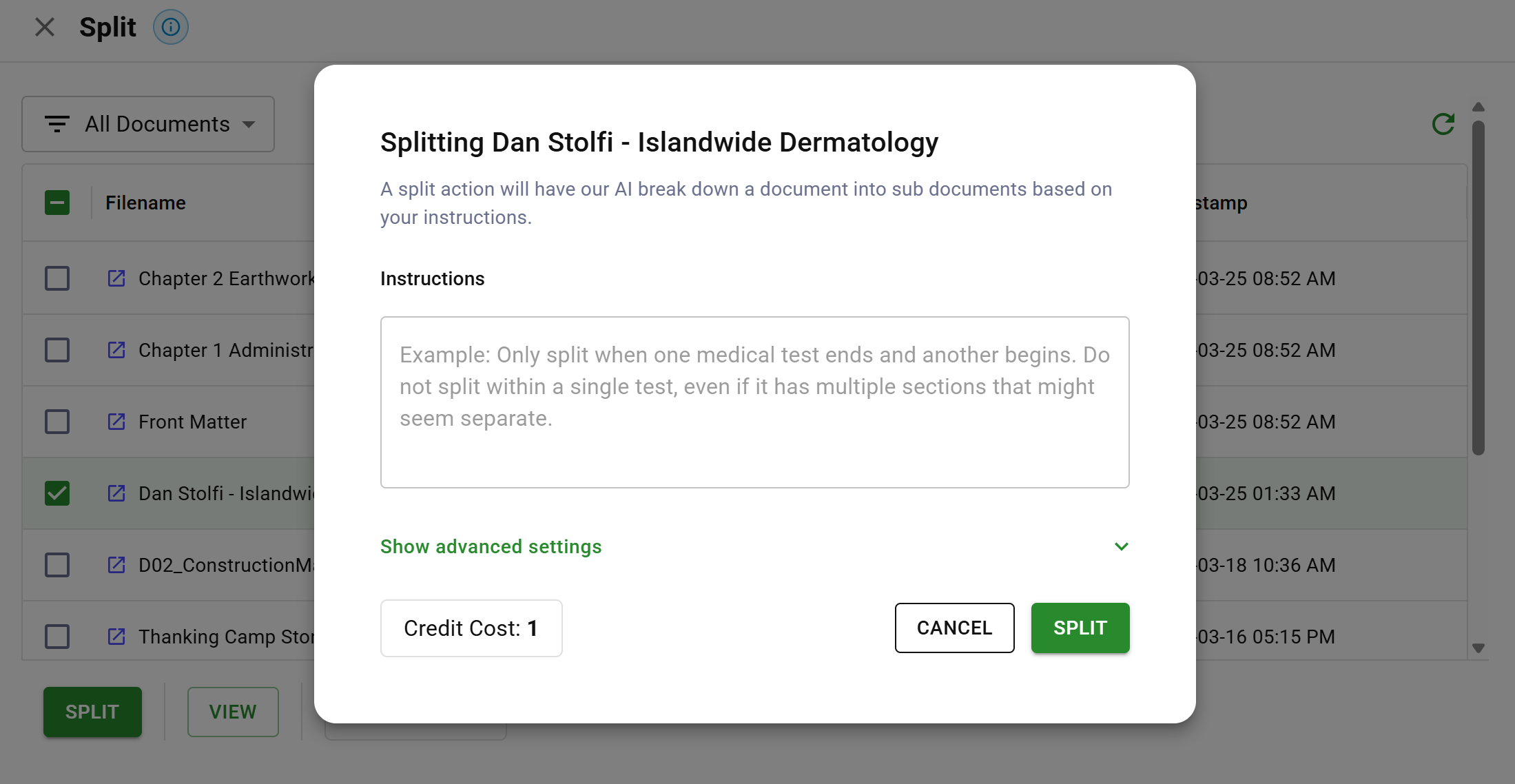

DocuPipe split interface showing AI-powered document decomposition with semantic instructions

DocuPipe split interface showing AI-powered document decomposition with semantic instructionsIntelligent document decomposition requires understanding what is on each page and how pages relate to each other. This understanding comes from building a layout graph that represents structural relationships.

Building the Layout Graph

A layout graph captures the visual and logical structure of a document. For each page, the graph records:

Visual elements:

- Text blocks with positions and boundaries

- Tables with cell structures and spans

- Headers and footers with their content

- Images and figures with captions

- Handwritten annotations with regions

Structural relationships:

- Which text blocks form paragraphs

- Which paragraphs form sections

- Which sections form documents

- Which tables span multiple pages

- Which headers apply to which content

Reading order:

- Left-to-right vs. right-to-left text direction

- Column structures and reading sequences

- Footnotes and their reference points

- Sidebar content and its relationship to main text

Building this graph requires more than OCR. It requires layout analysis that understands document conventions: how contracts are structured, how correspondence flows, how technical documents organize information.

Detecting Document Boundaries

With a layout graph in place, document boundaries become detectable through pattern analysis:

Explicit boundary indicators:

- New document headers or titles

- Page number resets (back to page 1)

- Dramatic formatting changes

- Cover pages or title pages

- Document end markers or signatures

Implicit boundary indicators:

- Topic discontinuity between adjacent content

- Change in writing style or vocabulary

- Different paper sizes or orientations

- Inconsistent header/footer patterns

- Gaps in date sequences

The system analyzes these indicators together. No single indicator is definitive, but the combination of multiple indicators produces reliable boundary detection.

Semantic Chunking Strategies

Once boundaries are detected, the massive file is split into logical documents. Within each document, further chunking may be required if the document itself is too large for single-pass processing.

Semantic chunking respects document structure:

Section-aware chunking:

- Split at section boundaries rather than arbitrary positions

- Keep related content together (a section header with its content)

- Preserve hierarchical relationships (subsections within sections)

Table-aware chunking:

- Never split within a table row

- Keep multi-page tables together when possible

- If splitting is required, duplicate headers in each chunk

Cross-reference preservation:

- Footnotes linked to their reference points

- Internal document references maintained

- Table of contents links preserved

- Citation relationships tracked across splits

Paragraph-aware chunking:

- Never split within a sentence

- Keep paragraphs intact when possible

- Maintain paragraph boundaries for context

Context overlap:

- Include trailing context from previous chunks

- Include leading context for subsequent chunks

- Enable the model to understand chunk position within the whole

The goal is chunks that are internally coherent and independently processable while maintaining enough context to understand their role in the larger document.

Process your massive document archives.

Classification and Routing

After chunking, each chunk must be routed to appropriate processing. A chunk containing dense tabular data requires different handling than a chunk of narrative text. A chunk with handwritten annotations needs different capabilities than printed forms.

Multi-Dimensional Classification

Document classification is not binary. A single chunk may have multiple relevant classifications:

Document type:

- Contract, correspondence, invoice, form, report, specification

- Determines which extraction schema to apply

Content characteristics:

- Primarily text, primarily tabular, mixed content

- Determines which processing mode to use

Quality indicators:

- Print quality (clear, faded, degraded)

- Scan quality (straight, skewed, noisy)

- Determines preprocessing requirements

Special handling:

- Contains handwriting

- Contains signatures

- Contains checkboxes or form fields

- Requires specialized models

Confidence-Based Routing

Classification produces confidence scores for each category. These scores drive routing decisions:

High confidence (90%+):

- Route directly to specialized processing

- Apply the identified schema without human verification

- Process at full throughput

Medium confidence (70-90%):

- Route to specialized processing with flagging

- Apply the identified schema but mark for potential review

- Continue processing but track for quality monitoring

Low confidence (below 70%):

- Route to human classification

- Present options with confidence scores

- Wait for human decision before proceeding

Multiple high-confidence classifications:

- May indicate a mixed-content chunk

- Consider further splitting

- Or apply multiple schemas and merge results

Routing to Specialist Models

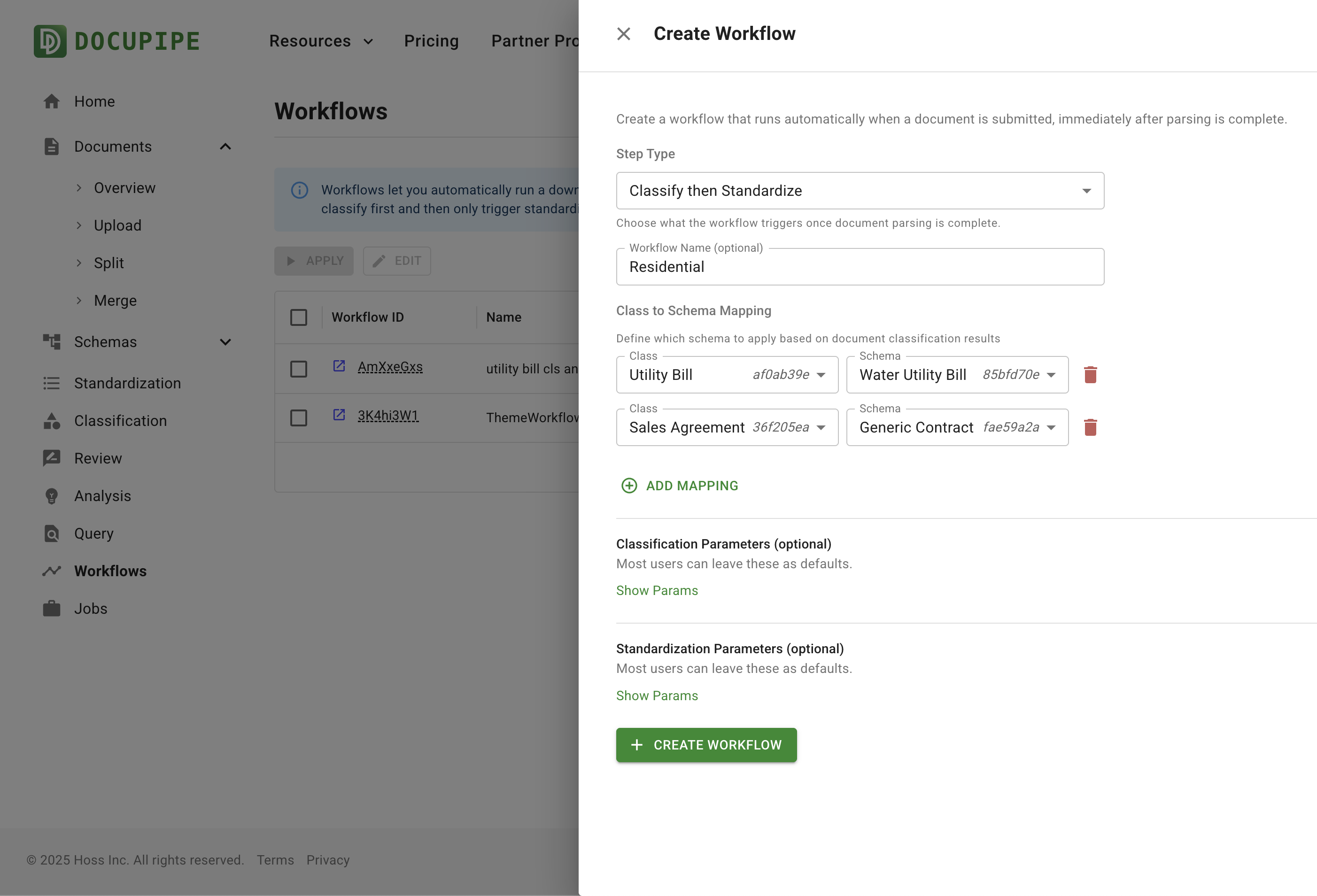

Workflow showing classification to schema routing

Workflow showing classification to schema routingDifferent content types benefit from different processing approaches:

Dense tabular content:

- Route to table-optimized extraction

- Use cell-level parsing with header detection

- Apply table-specific validation rules

Narrative text:

- Route to high-throughput text extraction

- Use standard schema application

- Optimize for speed over structure preservation

Handwritten content:

- Route to multimodal processing with image input

- Use vision-language models for interpretation

- Apply lower confidence thresholds given inherent difficulty

Form fields:

- Route to form-specific extraction

- Use field detection and checkbox recognition

- Apply form-specific validation rules

Mixed content:

- Decompose into regions

- Route each region to appropriate specialist

- Merge results with positional alignment

Feedback and Learning

Classification improves through operational feedback:

Correction tracking:

- When humans override classifications, record the correction

- Analyze patterns in corrections

- Identify systematic classification errors

Confidence calibration:

- Compare confidence scores to actual accuracy

- Adjust thresholds based on observed performance

- Recalibrate when document populations change

Model updates:

- Retrain classification models on accumulated examples

- Test new models against historical data

- Deploy updates with controlled rollouts

The classification system becomes more accurate over time as it learns the specific document types and characteristics present in each organization's document flow.

Implementation Patterns

Processing massive documents requires decisions about orchestration, parallelism, and failure handling.

Orchestration Architecture

The processing pipeline for large documents follows this sequence:

- Ingestion: Accept the large document and validate format

- Layout analysis: Build the layout graph for all pages

- Boundary detection: Identify document boundaries

- Splitting: Create logical sub-documents

- Classification: Classify each sub-document

- Routing: Direct each sub-document to appropriate processing

- Extraction: Execute extraction with specialized models

- Validation: Validate extraction results against schemas

- Aggregation: Combine results into unified output

- Delivery: Return results with full provenance

Each step may fail independently. The orchestration layer tracks progress and handles failures gracefully.

Parallel Processing

Large document processing benefits from parallelism at multiple levels:

Page-level parallelism:

- Layout analysis can process pages concurrently

- OCR can run on multiple pages simultaneously

- Initial feature extraction is embarrassingly parallel

Document-level parallelism:

- After splitting, sub-documents are independent

- Each sub-document can process concurrently

- Results are aggregated after all complete

Model-level parallelism:

- Multiple model instances can process different chunks

- Specialist models run on their assigned content types

- Load balancing distributes work across resources

Parallelism dramatically reduces processing time for large documents. A 1,000-page document that would take hours in serial processing completes in minutes with appropriate parallelization.

Failure Handling

Large documents have high failure potential. Any of a thousand pages might contain content that breaks processing. Production systems must handle failures gracefully:

Partial success:

- Process what can be processed

- Clearly mark failed sections

- Return partial results with failure annotations

Retry logic:

- Retry failed chunks with alternative approaches

- Try different models if one fails

- Escalate to human processing after retry exhaustion

Graceful degradation:

- If classification fails, use generic processing

- If extraction fails, return raw text with positions

- Always return something useful rather than nothing

Progress persistence:

- Checkpoint progress through the pipeline

- Resume from checkpoints after system failures

- Avoid reprocessing successfully completed sections

These patterns ensure that large document processing is reliable enough for production use, even when individual pages or sections present challenges.

Key Takeaways

- Context windows have hard limits - even 1M token windows degrade on 1,000-page documents; quality collapses long before you hit the limit

- Concatenated docs need boundary detection - page number resets, header changes, topic discontinuity all signal document boundaries

- Semantic chunking respects structure - split at sections, never mid-table, keep paragraphs intact, overlap context between chunks

- Classification drives routing - high confidence (90%+) goes direct, medium (70-90%) gets flagged, low triggers human classification

- Parallelism makes it fast - pages, documents, and models can all parallelize; what would take hours in serial completes in minutes

Turn massive PDFs into structured data.

LLMs have finite context windows that degrade in quality as length increases. A 1,000-page document can exceed 500,000 tokens, causing models to lose track of structure, confuse content from different sections, and hallucinate connections. Additionally, concatenated documents lack boundary markers, making it impossible to know where one document ends and another begins.

Semantic chunking splits documents at logical boundaries rather than arbitrary positions. It respects section headers, keeps tables intact, preserves paragraph boundaries, and maintains context overlap between chunks. This ensures each chunk is internally coherent and independently processable while understanding its role in the larger document.

Each chunk receives classification scores across multiple dimensions: document type, content characteristics, quality indicators, and special handling requirements. High-confidence classifications (90%+) route directly to specialized processing. Medium confidence (70-90%) proceeds with flagging. Low confidence (below 70%) routes to human classification before proceeding.

Recommended Articles

Related Documents

Related documents:

Related documents:

RIB

CT-e

Non-Disclosure Agreement

Construction Contract

BAS

Check

Resume

Invoice

NDA

SAF-T

Rent Roll

Term Sheet

ASIC Extract

Change Order

Delivery Note

+